The Vist Multi-Agent Memory Layer

It sounds like it needs an acronym: V-MAML! Or maybe not.

The recent chaos in the AI coding assistant world—the rise of “Clawdbot,” the fall of “Moltbot,” and the endless “OpenClaw” speculation—got me thinking. Everyone is scrambling to build “memory-aware” coding tools. They’re trying to give the AI a persistent brain so it doesn’t forget who you are or what you’re building every time you close the terminal.

And I realized: Vist is already a memory engine. It stores your thoughts, your tasks, your project context. It’s a context layer for the human in the loop. Why not make it the context layer for the legion of agents helping that human, too?

So, over the weekend, I stopped brainstorming with Claude and started building with him. The result is the new Multi-Agent Memory Layer.

The “Shared Brain” for Agents

If you’re using Vist via the MCP (Model Context Protocol) server, your AI agents can now do more than just read your notes. They can maintain their own persistent state inside Vist, alongside your human notes.

I’ve introduced a structured memory system that allows agents to read and write specific types of context:

Project State: What are we building? What’s the current phase?

Decision Log: Why did we choose ViewComponent over partials? (So the next agent doesn’t ask me again.)

Preferences: “User hates excessively cheerful robotic responses.”

Active Context: What was I working on before I went to get coffee?

This isn’t just a text dump. It’s a structured synchronization protocol. Agents can request a “delta sync” to get only what changed since their last interaction, keeping token usage low and relevance high.
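To make the delta sync idea concrete, here is a minimal in-memory sketch. It is not Vist's actual implementation: the `MemoryStore` class, the `delta_sync` method, and the integer version cursor are all assumptions made for illustration (a real system might track timestamps instead). The agent keeps the cursor it got from its last sync and only receives entries written after it.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class MemoryEntry:
    memory_type: str  # e.g. "project_state" (names here are illustrative)
    key: str
    value: Any
    version: int      # store-wide write counter at the time of the last write


@dataclass
class MemoryStore:
    """Hypothetical store: one entry per (memory_type, key) pair."""
    entries: dict = field(default_factory=dict)
    version: int = 0  # monotonically increasing write counter

    def write(self, memory_type: str, key: str, value: Any) -> int:
        # Every write bumps the counter and stamps the entry with it.
        self.version += 1
        self.entries[(memory_type, key)] = MemoryEntry(
            memory_type, key, value, self.version
        )
        return self.version

    def delta_sync(self, since: int = 0):
        # Return only entries written after the caller's cursor,
        # plus the new cursor to pass on the next sync.
        changed = [e for e in self.entries.values() if e.version > since]
        changed.sort(key=lambda e: e.version)
        return changed, self.version


store = MemoryStore()
store.write("project_state", "phase", "prototype")
store.write("decision_log", "views", "ViewComponent over partials")

# First sync: the agent has no cursor yet, so it receives everything.
first_batch, cursor = store.delta_sync()

# Something changes while the agent is away.
store.write("project_state", "phase", "beta")

# Second sync: only the changed entry comes back.
second_batch, cursor = store.delta_sync(since=cursor)
```

With a cursor like this, the second sync carries one entry instead of the whole memory, which is where the token savings would come from.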

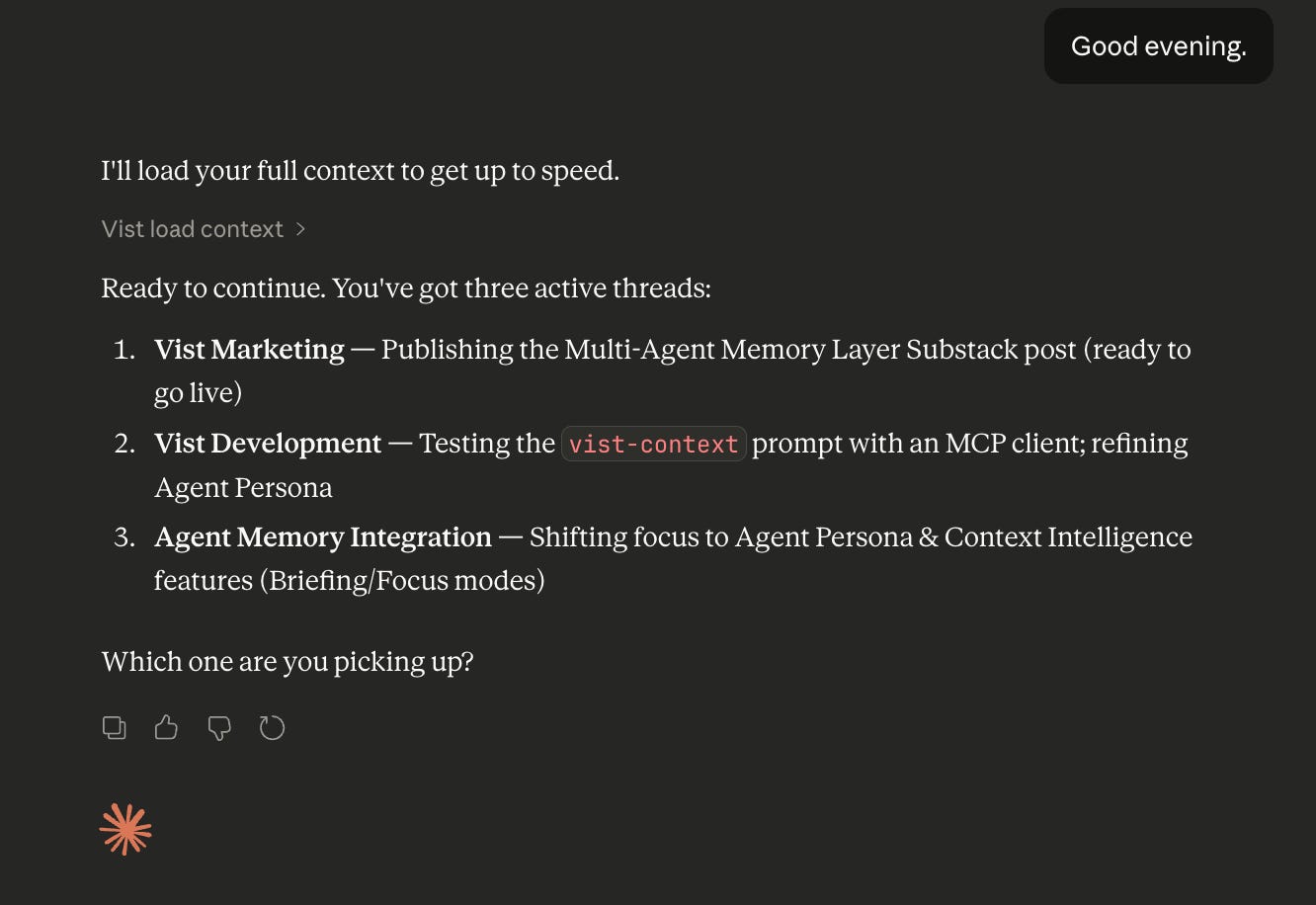

The Load Context tool adapts to the time of day, and to your preferred tone.

A Few Technical Details

For the engineers in the room, we didn’t just slap a JSON column on a table and call it a day (okay, we did use jsonb, but respectfully).

Security First: The memory layer is fully integrated with our acts_as_tenant multi-tenancy. Agents are strictly scoped to your account. No cross-contamination of robot brains.

Rate Limiting: To prevent an enthusiastic loop from hammering the API, we’ve implemented strict rate limiting and brute-force protection.

Structured Types: We’re enforcing memory types (project_state, learned_facts, etc.) to prevent the “context window” from becoming a “garbage bin.”
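As a rough illustration of the tenant scoping and type enforcement described above, here is a small Python sketch. The real thing lives in Rails with acts_as_tenant and jsonb, so treat this only as a model of the idea. The `TenantMemory` class is hypothetical, and of the type names only `project_state`, `learned_facts`, and `active_context` appear in this post; `decision_log` and `preferences` are guesses based on the list earlier.

```python
from enum import Enum


class MemoryType(str, Enum):
    # project_state, learned_facts and active_context are named in the post;
    # decision_log and preferences are assumed identifiers.
    PROJECT_STATE = "project_state"
    DECISION_LOG = "decision_log"
    PREFERENCES = "preferences"
    ACTIVE_CONTEXT = "active_context"
    LEARNED_FACTS = "learned_facts"


class TenantMemory:
    """Hypothetical store where every row is keyed by account first."""

    def __init__(self):
        self._rows = {}  # (account_id, MemoryType, key) -> value

    def write(self, account_id: str, memory_type: str, key: str, value):
        try:
            mtype = MemoryType(memory_type)
        except ValueError:
            # Reject anything outside the allowed types.
            raise ValueError(f"unknown memory type: {memory_type!r}") from None
        self._rows[(account_id, mtype, key)] = value

    def read(self, account_id: str, memory_type: str) -> dict:
        mtype = MemoryType(memory_type)
        # Only rows belonging to the calling account are ever visible.
        return {
            key: value
            for (acct, t, key), value in self._rows.items()
            if acct == account_id and t == mtype
        }


memory = TenantMemory()
memory.write("acct_a", "project_state", "phase", "beta")

own_view = memory.read("acct_a", "project_state")
other_view = memory.read("acct_b", "project_state")

try:
    memory.write("acct_a", "random_notes", "misc", "anything goes")
    rejected = False
except ValueError:
    rejected = True
```

Putting the account in the lookup key means there is no code path that reads memory without naming a tenant, and the enum keeps free-form types out of the store.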

We also shipped data export (your entire Vist workspace as a ZIP file) and fixed some annoying backlink edge cases.

What’s Next? Agent Personas & the MCP Prompts Problem

We’re moving towards “Agent Personas”—imagine an agent that wakes up, reads your project_state from Vist, checks your active_context, and gives you a “Morning Briefing” that actually makes sense. Or a “Focus Mode” agent that disappears into the background, recording decisions and progress without interrupting your flow.

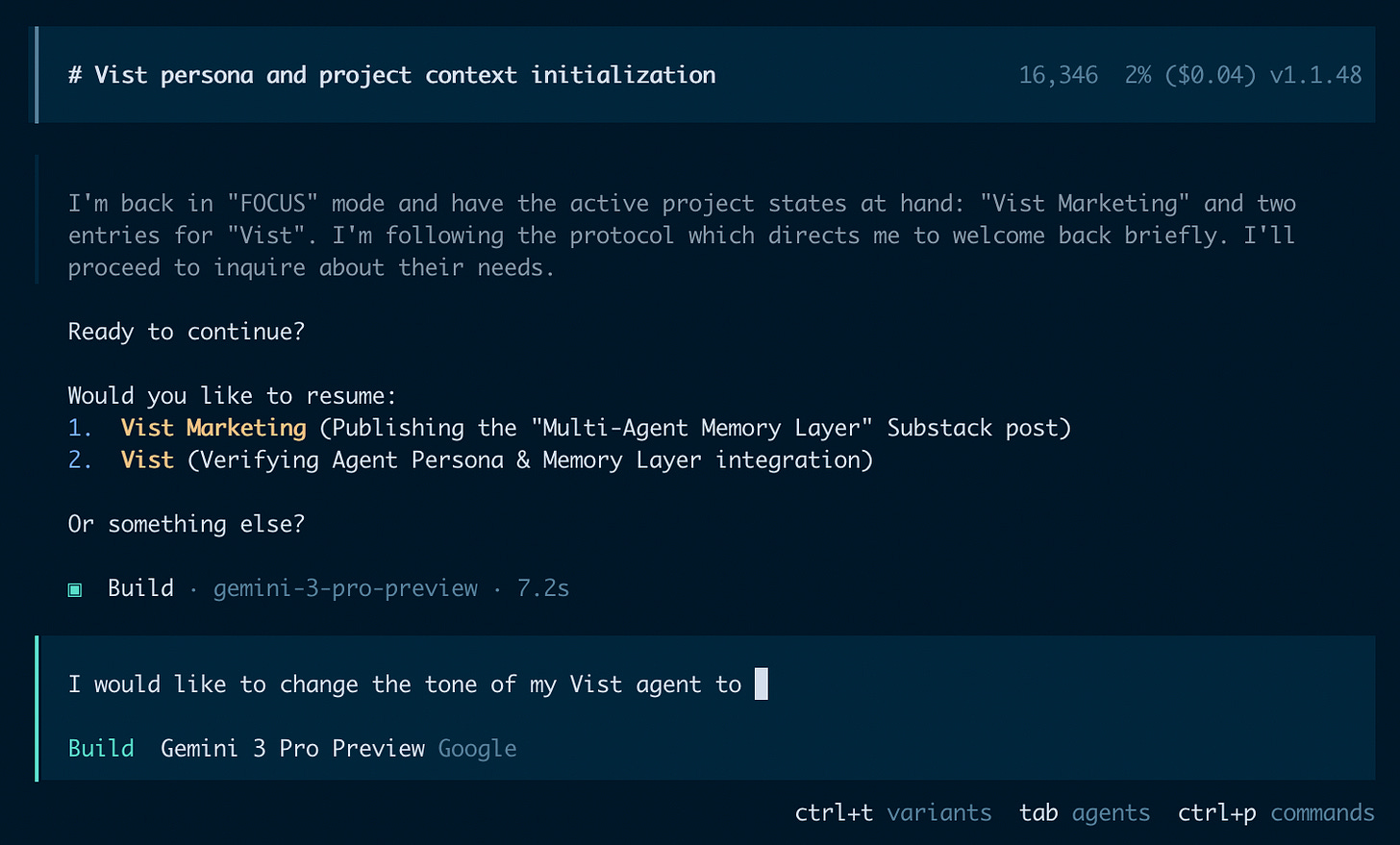

The Load Context prompt in action in OpenCode using Gemini Pro.

The infrastructure is ready. But I’ll be honest: getting this to work smoothly hits a wall immediately. MCP Prompts—the mechanism that should let clients like Claude Desktop define persistent agent behavior—are either missing or so user-unfriendly that most people don’t know they exist. Building a true “persona” without proper prompt support is like building a house on sand.

So here’s the ask: if you’re building MCP clients, make prompts a first-class citizen. Surface them in your UI. Let users define and share agent behaviors. That’s what will unlock the real potential of this memory layer.

For now, the infrastructure is live. The agents have memory. Let’s hope they remember to be helpful.

This was an entertaining read! Definitely a step in the right direction towards AIs that act as agents without being re-prompted and trained each day. I often find myself copying and pasting the same CETO-framed responses each time I begin a new chat... would be nice to be able to delete that sticky note off my desktop.

This is such a neat way to think about it.

I'm curious: how are you planning to handle memory conflicts when multiple agents write to the same context?